Applied Sciences | Free Full-Text | Inter-Rater Variability in the Evaluation of Lung Ultrasound in Videos Acquired from COVID-19 Patients

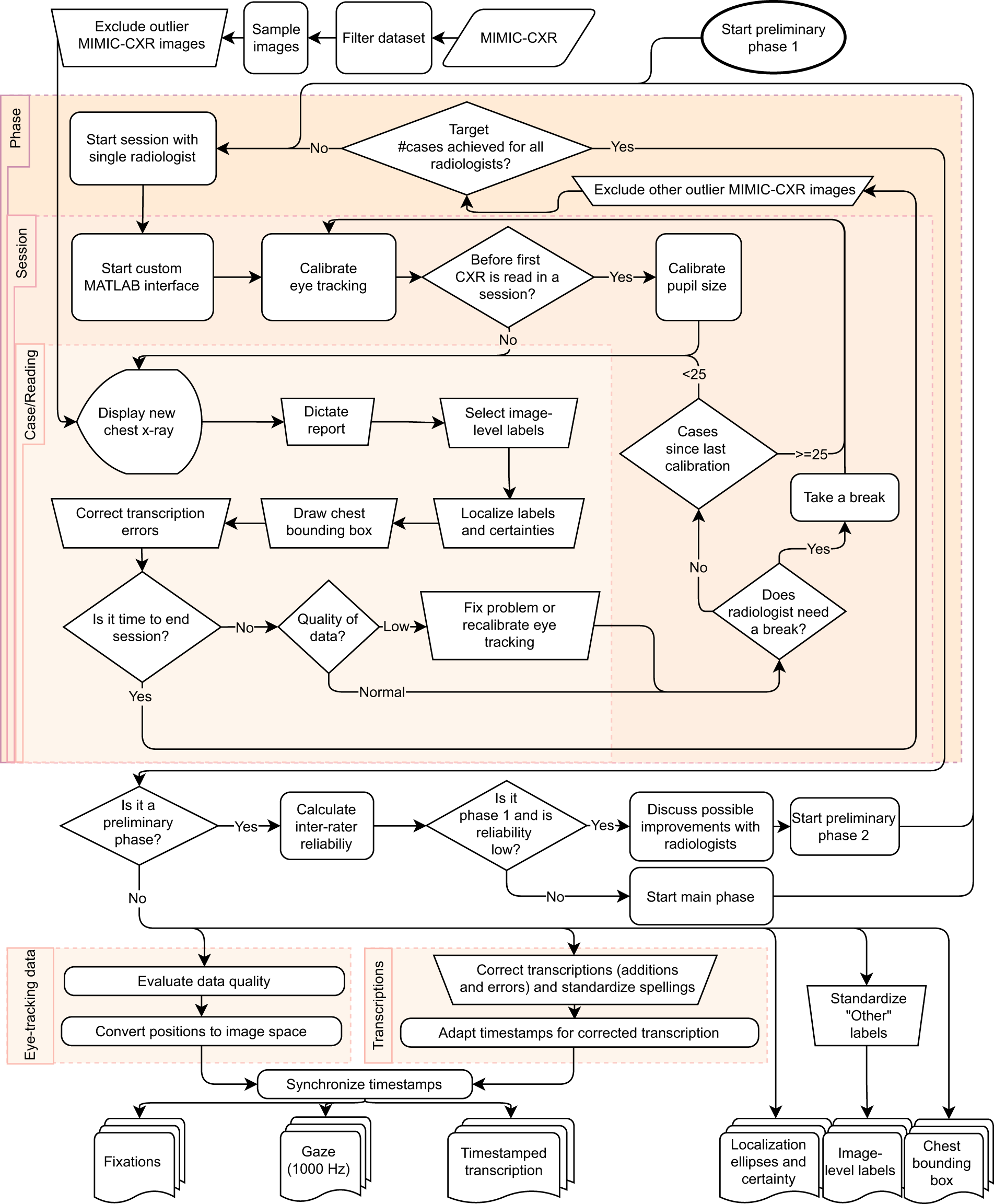

REFLACX, a dataset of reports and eye-tracking data for localization of abnormalities in chest x-rays | Scientific Data

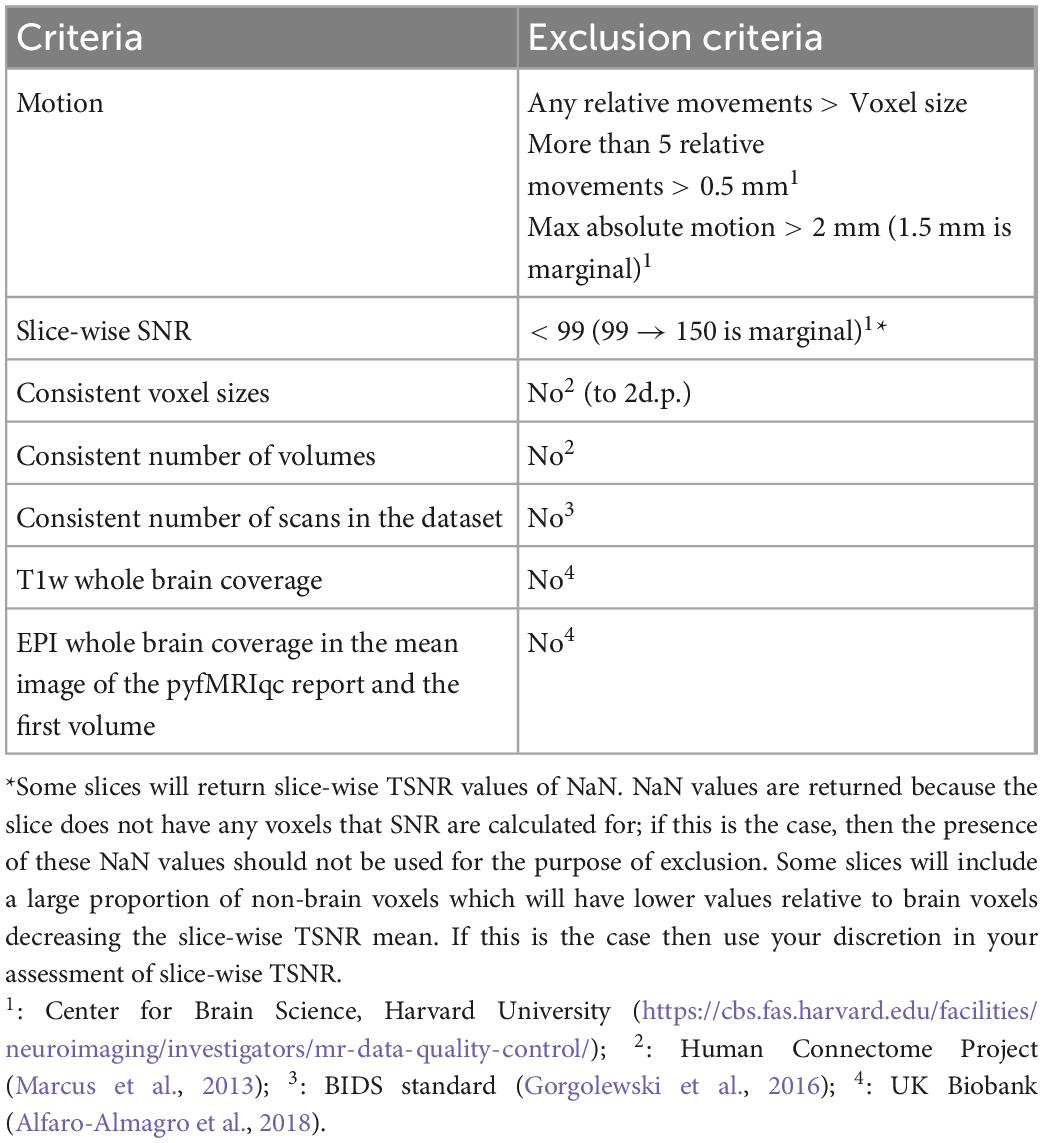

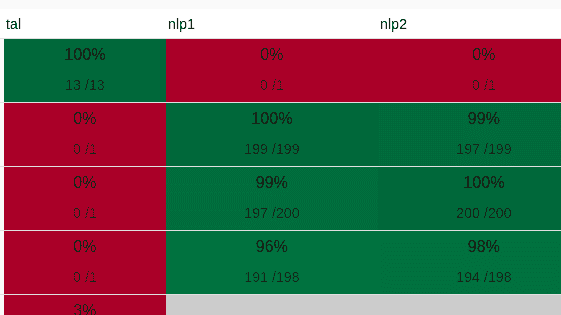

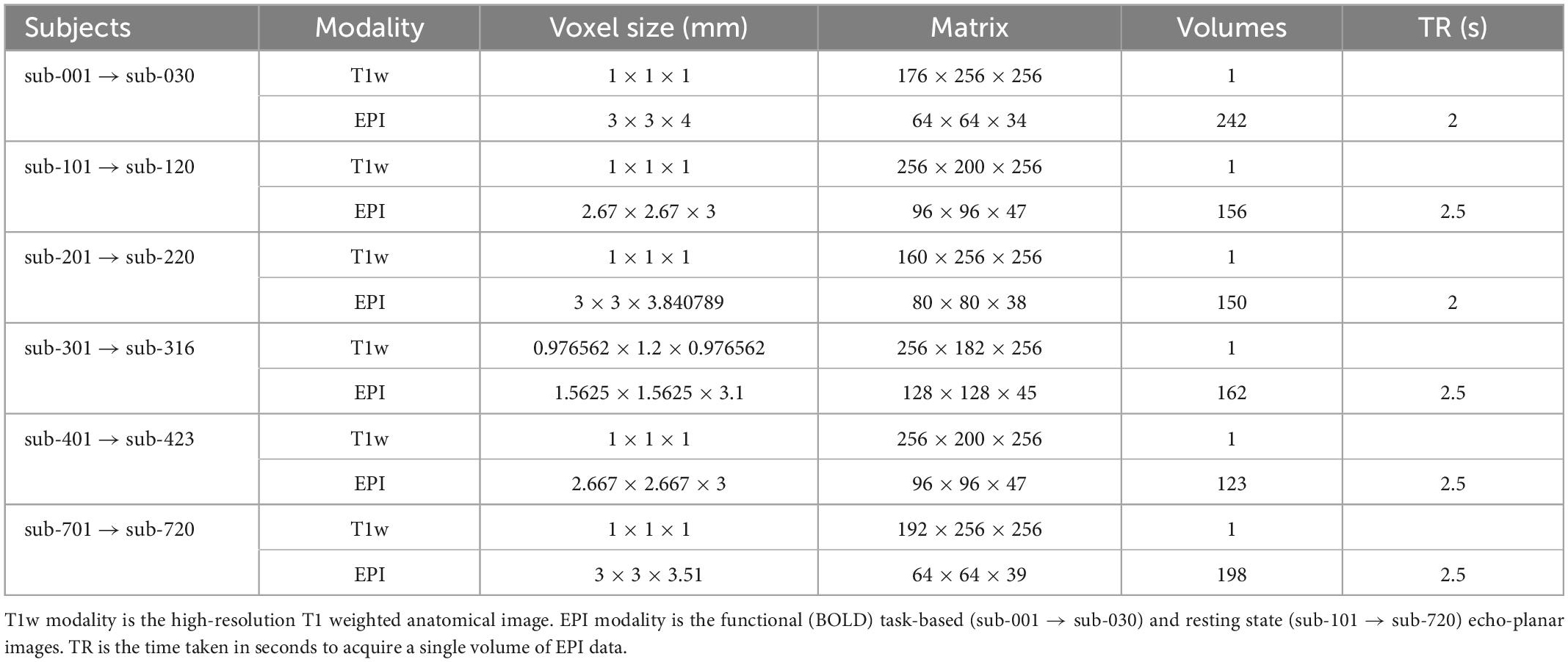

Frontiers | Inter-rater reliability of functional MRI data quality control assessments: A standardised protocol and practical guide using pyfMRIqc

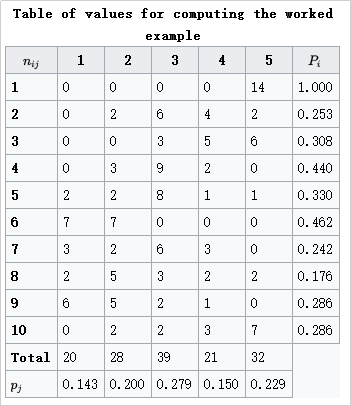

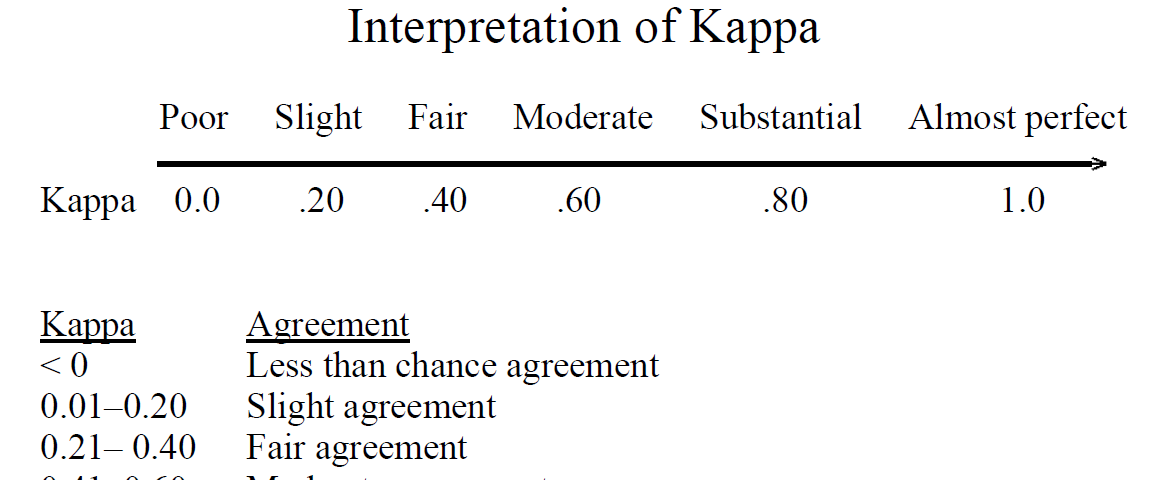

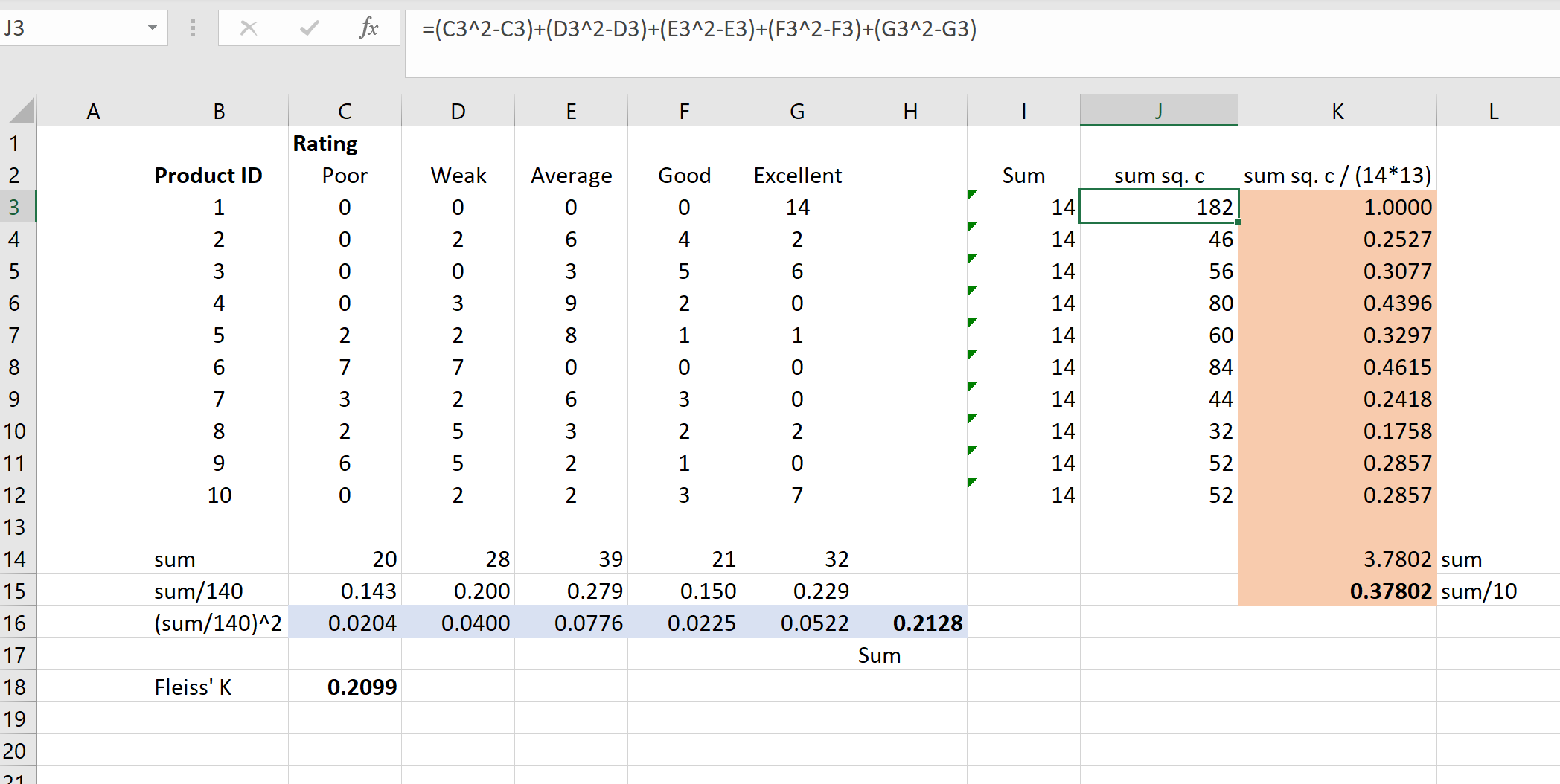

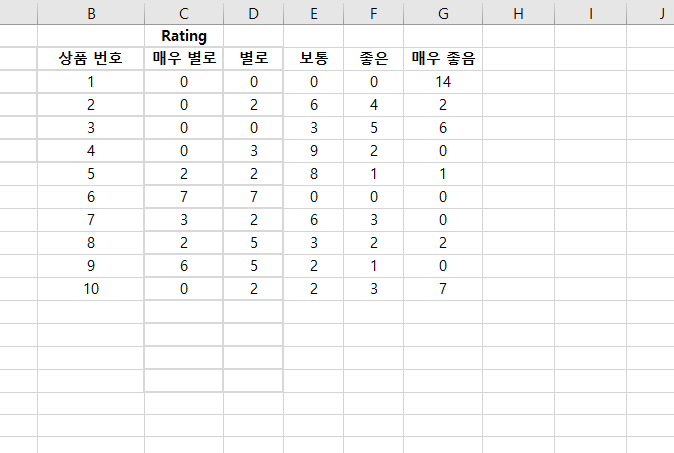

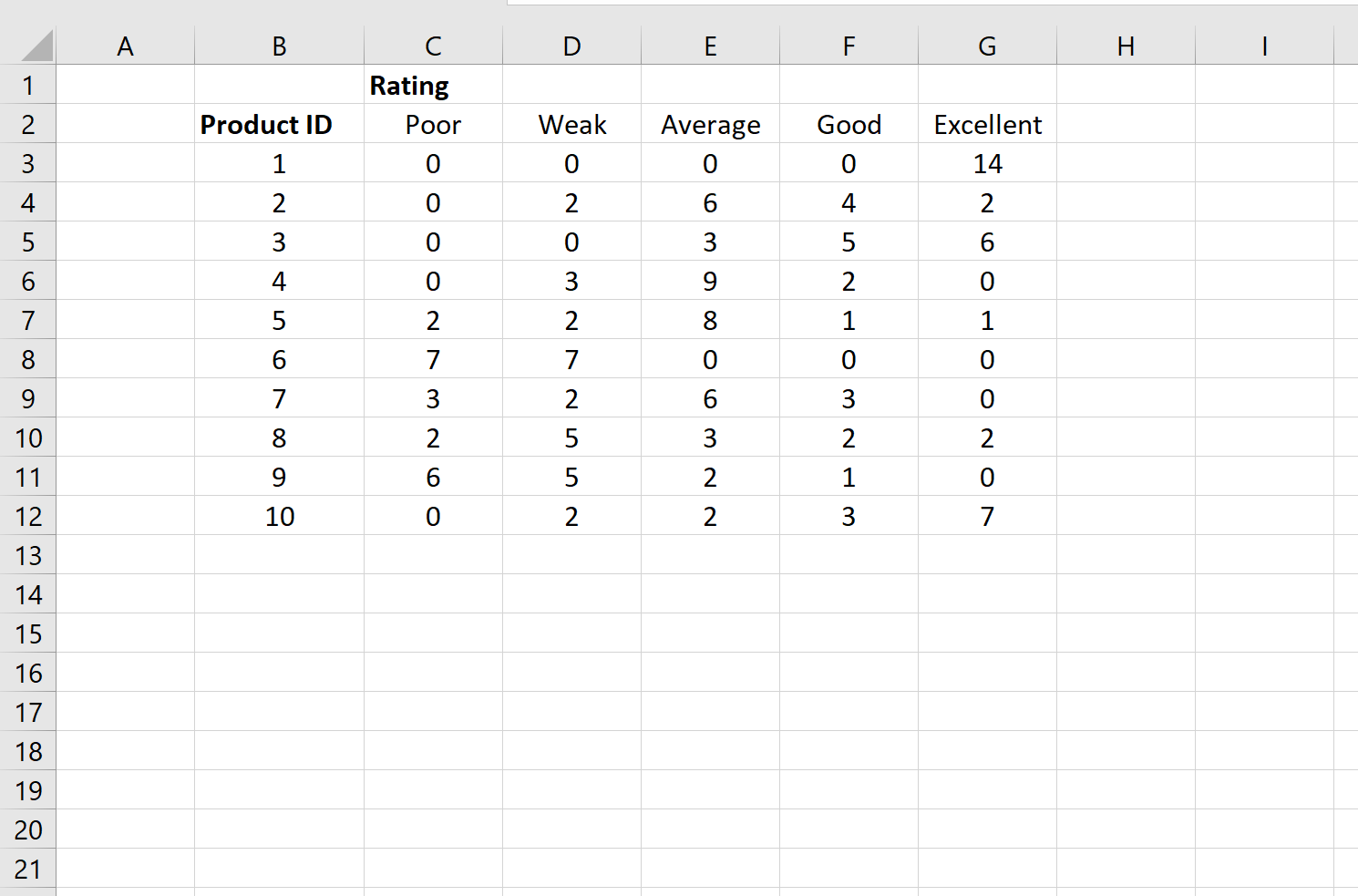

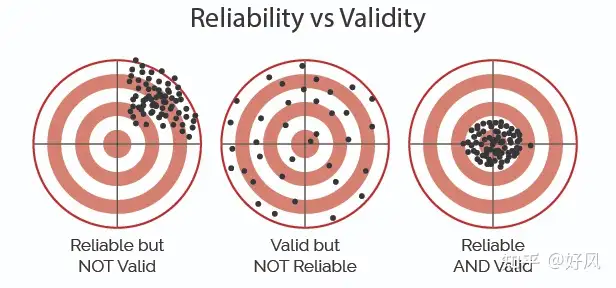

python - Is fleiss kappa a reliable measure for interannotator agreement? The following results confuses me, are there any involved assumptions while using it? - Stack Overflow

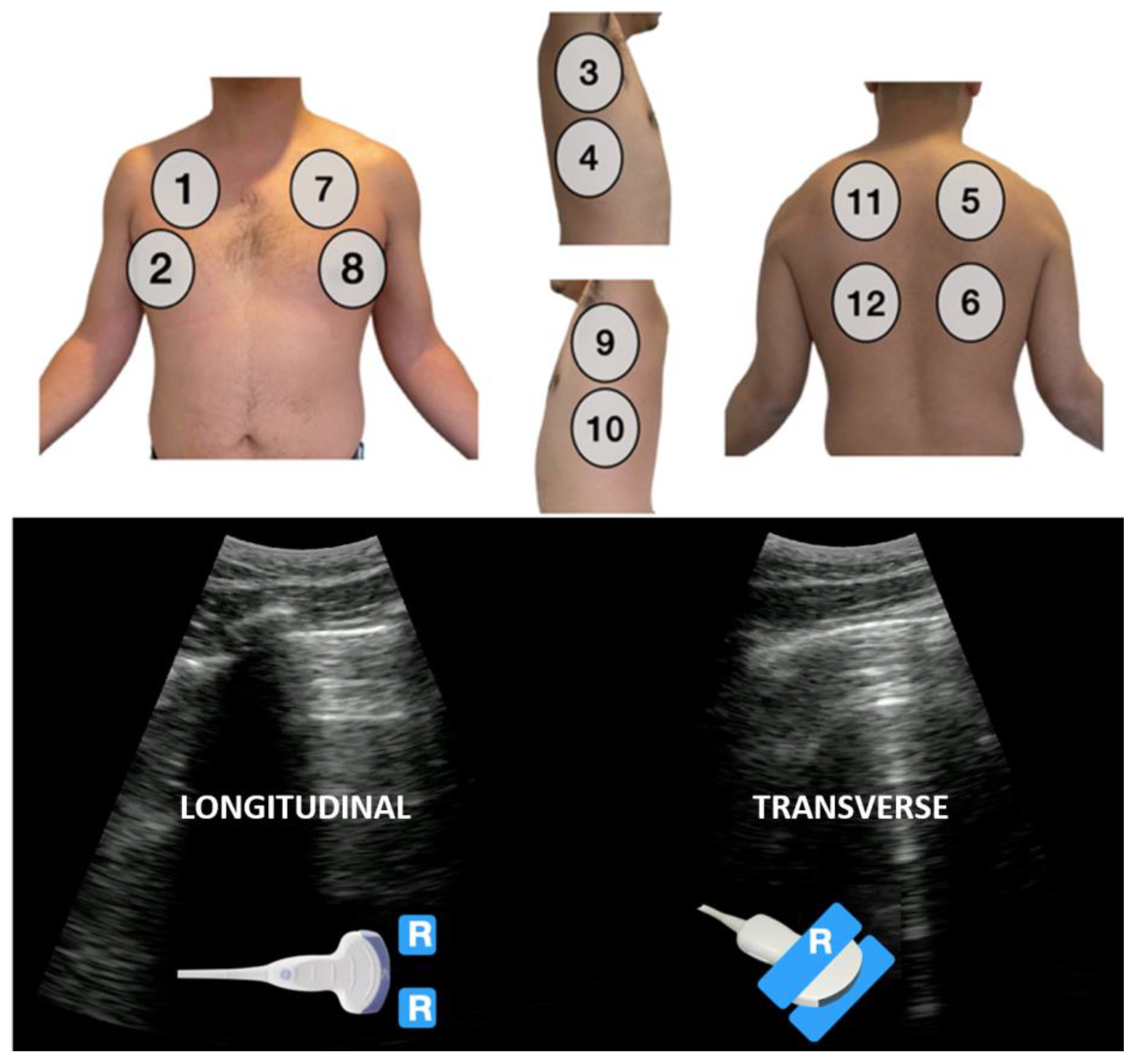

GitHub - djarenas/Inter-Rater: Inter-rater quantifies the reliability between multiple raters who evaluate a group of subjects. It calculates the group quantity, Fleiss kappa, and it improves on existing software by keeping information